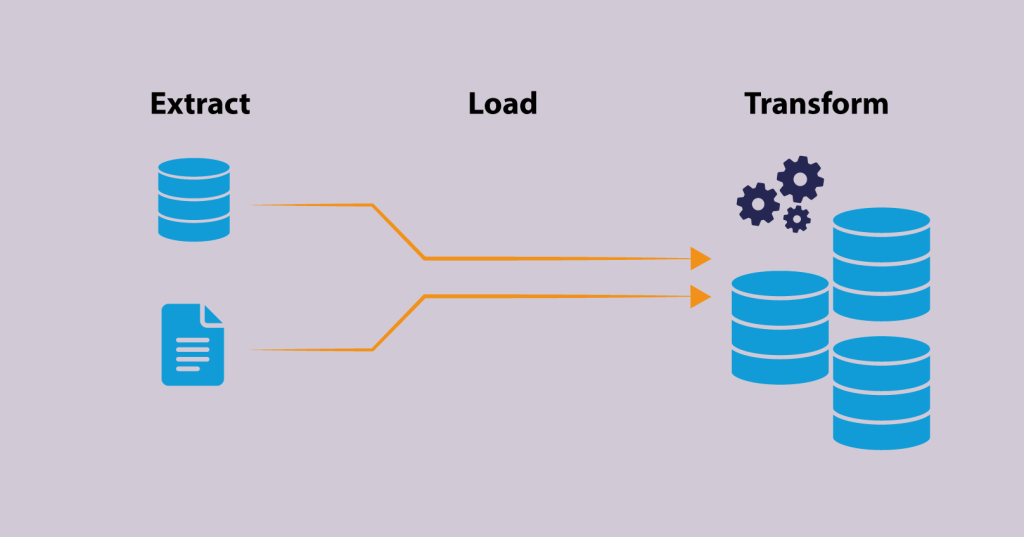

Extraction, Transformation, Loading

What is ETL?

ETL stands for Extract, Transform, Load, and refers to the process of extracting data from various sources, transforming it into a format suitable for analysis, and then loading it into a target database or data warehouse. The purpose of ETL is to provide a single, consolidated view of data from different sources for reporting and analysis purposes.


Extract

The first step of the ETL process is to extract the data from various sources. This can include databases, text files, spreadsheets, or other sources. The data may be stored in different formats and structures, so it is important to identify the format and structure of each source before extraction.
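As a rough illustration of extraction from sources with different formats, the sketch below reads a CSV text and a JSON text into one list of records. The sample data and the function names `extract_csv` and `extract_json` are hypothetical; real sources would typically be files, database tables, or API responses.

```python
import csv
import io
import json

# Hypothetical sample sources, inlined as strings so the example is self-contained.
CSV_SOURCE = "id,name,city\n1,Ada,London\n2,Grace,New York\n"
JSON_SOURCE = '[{"id": 3, "name": "Linus", "city": "Helsinki"}]'


def extract_csv(text):
    """Read delimited text into a list of dicts keyed by the header row."""
    return [dict(row) for row in csv.DictReader(io.StringIO(text))]


def extract_json(text):
    """Parse a JSON array of objects into a list of dicts."""
    return json.loads(text)


records = extract_csv(CSV_SOURCE) + extract_json(JSON_SOURCE)
print(len(records), "records extracted")
```

Note that the CSV reader returns every value as a string while the JSON parser keeps numbers as numbers, so the same `id` field arrives with different types. Knowing this about each source up front is what lets the later steps reconcile them.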

Transform

Once the data has been extracted, the next step is to transform it into a suitable format. This involves cleaning, normalizing, and restructuring the data so that it can be easily loaded into the target database. Data cleaning may involve removing duplicates, correcting errors, and filling in missing values. Data normalization involves converting the data into a standard format that can be easily analyzed.
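The cleaning and normalization operations described here might look like the following minimal sketch. The field names (`email`, `name`, `city`), the choice of `email` as the deduplication key, and the `"UNKNOWN"` placeholder are all assumptions made for illustration.

```python
def transform(rows, default_city="UNKNOWN"):
    """Clean and normalize rows: trim text, standardize case, fill gaps, drop duplicates."""
    seen = set()
    cleaned = []
    for row in rows:
        # Normalization: lower-case emails, collapse whitespace, title-case names.
        email = (row.get("email") or "").strip().lower()
        name = " ".join((row.get("name") or "").split()).title()
        # Filling in missing values with a placeholder.
        city = (row.get("city") or "").strip() or default_city
        # Deduplication: skip rows with no key or a key already seen.
        if not email or email in seen:
            continue
        seen.add(email)
        cleaned.append({"email": email, "name": name, "city": city})
    return cleaned


raw = [
    {"email": " Ada@Example.com ", "name": "ada  lovelace", "city": "London"},
    {"email": "ada@example.com", "name": "Ada Lovelace", "city": "London"},
    {"email": "grace@example.com", "name": "GRACE HOPPER", "city": ""},
]
clean = transform(raw)
for row in clean:
    print(row)
```

Here the first two input rows collapse into one because they share an email address once it is normalized, and the empty city is replaced with the placeholder.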

Load

The final step of the ETL process is to load the transformed data into the target database or data warehouse. This step requires mapping the transformed data into the target database schema and ensuring that the data is loaded correctly. It is also important to validate the data to ensure that it is accurate and complete.
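A minimal sketch of the load step, using Python's built-in `sqlite3` module with an in-memory database standing in for the target warehouse. The `customers` table, its columns, and the row-count check are assumptions chosen for the example; a real target would have its own schema and validation rules.

```python
import sqlite3


def load(conn, rows):
    """Map transformed rows onto the target schema, insert them, and validate the count."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers ("
        "email TEXT PRIMARY KEY, full_name TEXT NOT NULL, city TEXT)"
    )
    (before,) = conn.execute("SELECT COUNT(*) FROM customers").fetchone()
    # Mapping: the source field 'name' becomes the target column 'full_name'.
    params = [(r["email"], r["name"], r["city"]) for r in rows]
    with conn:  # commits on success, rolls back if any insert fails
        conn.executemany(
            "INSERT INTO customers (email, full_name, city) VALUES (?, ?, ?)",
            params,
        )
    # Validation: the table should have grown by exactly the number of rows sent.
    (after,) = conn.execute("SELECT COUNT(*) FROM customers").fetchone()
    if after - before != len(rows):
        raise ValueError(f"expected {len(rows)} new rows, found {after - before}")
    return after - before


conn = sqlite3.connect(":memory:")
rows = [
    {"email": "ada@example.com", "name": "Ada Lovelace", "city": "London"},
    {"email": "grace@example.com", "name": "Grace Hopper", "city": "UNKNOWN"},
]
loaded = load(conn, rows)
print(loaded, "rows loaded")
```

Wrapping the inserts in a single transaction means a failure partway through leaves the target unchanged rather than half loaded.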

ETL matters because it helps organizations make sense of the large volumes of data they collect: relevant information is extracted from the sources, transformed into a usable format, and loaded into a target database or data warehouse, where it can support informed decisions. By automating the ETL process, organizations can save time and resources while keeping their data accurate and usable.

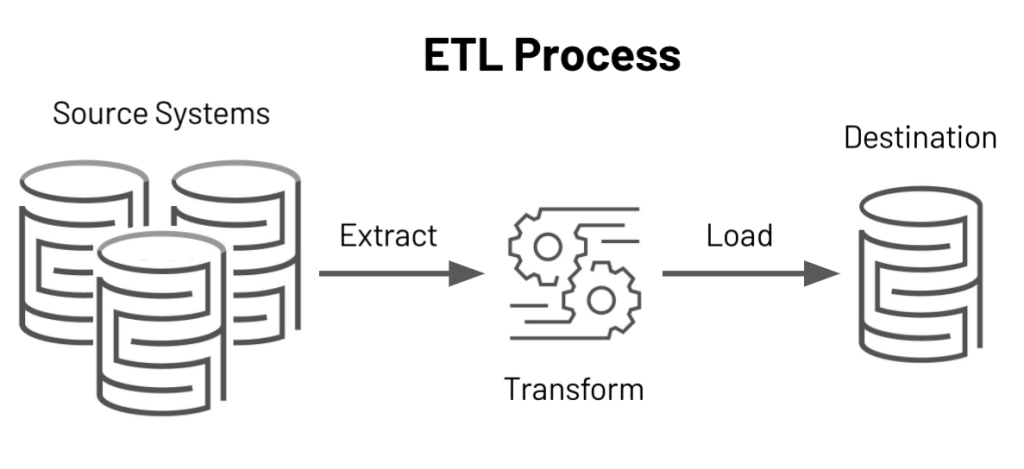

How Does ETL Work?

ETL works by following these steps:

- Extract: Data is extracted from various sources such as databases, flat files, APIs, and more. The extracted data is then transferred to a temporary storage area.

- Transform: The extracted data is then transformed, or cleaned, to ensure consistency and accuracy. This includes operations such as data mapping, data validation, data standardization, and data enrichment.

- Load: The transformed data is then loaded into the target database or data warehouse. The target database should be optimized for reporting and analysis, allowing for fast and efficient querying.

- Repeat: The ETL process is usually repeated on a schedule (e.g., daily, weekly, monthly) to keep the target database up-to-date with the latest data from the sources.
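The four steps above can be sketched end to end as follows. This is a simplified illustration, not a production pipeline: the `stock` table, the `sku` and `qty` fields, and the in-memory list used as the staging area are assumptions, and the scheduled repeat is simulated by calling `run_etl` twice.

```python
import csv
import io
import sqlite3


def extract(source_text):
    """Extract: parse the source into rows held in a temporary staging list."""
    return list(csv.DictReader(io.StringIO(source_text)))


def transform(rows):
    """Transform: standardize the key, convert types, drop rows with no key."""
    return [
        {"sku": r["sku"].strip().upper(), "qty": int(r["qty"])}
        for r in rows
        if r["sku"].strip()
    ]


def load(conn, rows):
    """Load: insert new keys and overwrite existing ones so reruns are safe."""
    with conn:
        conn.executemany(
            "INSERT OR REPLACE INTO stock (sku, qty) VALUES (:sku, :qty)", rows
        )


def run_etl(conn, source_text):
    staged = extract(source_text)
    rows = transform(staged)
    load(conn, rows)
    return len(rows)


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE stock (sku TEXT PRIMARY KEY, qty INTEGER)")

# Two runs stand in for two scheduled executions against fresh source data.
run_etl(conn, "sku,qty\na1,5\nb2,3\n")
run_etl(conn, "sku,qty\na1,7\nc3,1\n")

result = conn.execute("SELECT sku, qty FROM stock ORDER BY sku").fetchall()
print(result)
```

Because the load replaces rows by primary key, the second run updates `A1` rather than duplicating it, which is one common way to keep repeated runs consistent.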

Overall, the ETL process is designed to provide a single version of the truth for reporting and analysis purposes, allowing organizations to make informed decisions based on accurate and consistent data.

ETL Tools

ETL tools are software applications used to perform the Extract, Transform, Load (ETL) process. Some popular ETL tools include:

- Talend

- Apache NiFi

- Microsoft SSIS

- Informatica PowerCenter

- Oracle Data Integrator (ODI)

- Google Cloud Dataflow

- AWS Glue

- Alteryx

- Pentaho Data Integration

- Hevo Data

Each ETL tool has its own set of features and capabilities, and the choice of tool will depend on the specific needs of the organization. Factors to consider when selecting an ETL tool include data sources, data volumes, performance requirements, and budget.

Why is ETL Important?

ETL is important because it helps organizations to integrate data from multiple sources into a single, consolidated view. This provides several benefits, including:

- Improved Data Quality: ETL helps to clean, standardize, and validate data, improving its overall quality.

- Better Data Insights: With data from multiple sources integrated into a single view, it’s easier to perform analysis and generate valuable insights.

- Increased Efficiency: ETL automates the process of integrating data, reducing the time and effort required to manually consolidate data from different sources.

- Enhanced Decision Making: By providing a single, accurate view of data, ETL enables organizations to make informed decisions based on the latest, most complete information.

- Better Data Management: ETL helps organizations to manage their data more effectively, reducing the risk of data duplication and improving the overall consistency of their data.

Overall, ETL is an important technology for organizations that need to integrate and make sense of data from multiple sources.

Benefits and Challenges of ETL (Extract, Transform, and Load)

Benefits of ETL (Extract, Transform, and Load):

Data Integration:

ETL helps organizations to integrate data from multiple sources into a centralized data repository. With a single source of truth to draw on, it becomes easier to make decisions based on a complete picture of the data.

Improved Data Quality:

ETL helps organizations to cleanse and validate data, improving data quality by removing inconsistencies, correcting errors, and filling in missing values.

Increased Efficiency:

ETL automates data extraction, transformation, and many other manual data-related tasks, which saves time and resources for organizations.

Informed Decision-Making:

ETL helps organizations to transform raw data into meaningful information, which supports informed decision-making.

Consistent and Reliable Data:

ETL ensures that data is consistent and reliable by removing duplicates, correcting errors, and transforming the data into a standard format.

Improved Data Analytics:

ETL helps organizations to transform raw data into a format that is suitable for analysis, making it easier to gain insights from their data.

Challenges of ETL (Extract, Transform, and Load):

Complexity:

ETL can be a complex process, especially when dealing with large volumes of data.

Data Integration Challenges:

Integrating data from multiple sources can be challenging, as different systems may use different data formats and data structures.
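One common way to handle this, sketched below, is to describe each source with a small profile that maps its field names and date format onto a shared schema. The source names (`crm`, `shop`), their field names, and their date formats are hypothetical.

```python
from datetime import datetime

# Hypothetical per-source profiles: field renames plus the date format each source uses.
SOURCE_PROFILES = {
    "crm": {
        "fields": {"CustomerName": "name", "SignupDate": "signup_date"},
        "date_format": "%d/%m/%Y",
    },
    "shop": {
        "fields": {"full_name": "name", "created": "signup_date"},
        "date_format": "%Y-%m-%d",
    },
}


def to_common_schema(source, record):
    """Rename fields to the shared schema and convert dates to ISO 8601."""
    profile = SOURCE_PROFILES[source]
    row = {target: record[src] for src, target in profile["fields"].items()}
    parsed = datetime.strptime(row["signup_date"], profile["date_format"])
    row["signup_date"] = parsed.date().isoformat()
    return row


unified = [
    to_common_schema("crm", {"CustomerName": "Ada Lovelace", "SignupDate": "31/01/2024"}),
    to_common_schema("shop", {"full_name": "Grace Hopper", "created": "2024-02-15"}),
]
for row in unified:
    print(row)
```

Keeping the differences in data rather than in code means that adding another source is mostly a matter of adding another profile.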

Data Quality Issues:

Ensuring data quality can be challenging, as data may contain errors or inconsistencies that need to be addressed.
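A data quality check can be as simple as a function that scans rows and reports what it finds. The sketch below is one possible shape, assuming hypothetical `id` and `email` fields and three illustrative rules (required fields, a very loose email check, and unique ids).

```python
def check_quality(rows, required=("id", "email")):
    """Return (row_index, problem) tuples; an empty list means every check passed."""
    problems = []
    seen_ids = set()
    for i, row in enumerate(rows):
        # Completeness: required fields must be present and non-empty.
        for field in required:
            if row.get(field) in (None, ""):
                problems.append((i, f"missing {field}"))
        # Validity: a deliberately loose format check for illustration only.
        email = row.get("email") or ""
        if email and "@" not in email:
            problems.append((i, "malformed email"))
        # Uniqueness: the same id should not appear twice.
        row_id = row.get("id")
        if row_id not in (None, ""):
            if row_id in seen_ids:
                problems.append((i, "duplicate id"))
            seen_ids.add(row_id)
    return problems


rows = [
    {"id": 1, "email": "a@example.org"},
    {"id": 1, "email": "bad"},
    {"id": 2, "email": ""},
]
problems = check_quality(rows)
for index, problem in problems:
    print(f"row {index}: {problem}")
```

Reporting problems instead of silently fixing them leaves the decision (reject, repair, or quarantine the row) to the pipeline that calls the check.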

Performance Issues:

ETL processes can be resource-intensive and may impact system performance.
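One way to limit resource usage is to process data in fixed-size batches instead of holding everything in memory at once. The sketch below assumes a hypothetical `events` table and a batch size of 1000; suitable values depend on the actual system.

```python
import sqlite3
from itertools import islice


def batched(iterable, size):
    """Yield lists of up to `size` items without materializing the whole input."""
    it = iter(iterable)
    while True:
        chunk = list(islice(it, size))
        if not chunk:
            return
        yield chunk


def load_in_batches(conn, rows, batch_size=1000):
    """Insert rows batch by batch, committing after each batch."""
    total = 0
    for chunk in batched(rows, batch_size):
        with conn:  # one transaction per batch
            conn.executemany(
                "INSERT INTO events (id, payload) VALUES (?, ?)", chunk
            )
        total += len(chunk)
    return total


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (id INTEGER PRIMARY KEY, payload TEXT)")

# A generator stands in for a large source that should not be read all at once.
rows = ((i, f"event-{i}") for i in range(2500))
inserted = load_in_batches(conn, rows, batch_size=1000)
print(inserted, "rows inserted")
```

Committing per batch keeps transactions short, at the cost that a failure can leave earlier batches already loaded, so this approach pairs well with a load step that is safe to rerun.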

Security Concerns:

ETL processes often handle sensitive data, which requires appropriate security measures to prevent unauthorized access or data breaches.
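As one example of such a measure, sensitive fields can be pseudonymized during transformation so that the raw values never reach the target. The sketch below uses a keyed hash (HMAC-SHA256) from Python's standard library. The field names and the hard-coded key are assumptions for demonstration only; in practice the key would come from a secrets manager, and this technique complements access control and encryption rather than replacing them.

```python
import hashlib
import hmac


def pseudonymize(value, secret_key):
    """Replace a value with a keyed hash: stable for joins, not reversible without the key."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_sensitive(row, sensitive_fields, secret_key):
    """Return a copy of the row with the listed fields pseudonymized."""
    return {
        key: pseudonymize(str(value), secret_key) if key in sensitive_fields else value
        for key, value in row.items()
    }


# Demo key only; never hard-code a real key.
DEMO_KEY = b"demo-key-not-for-production"

row = {"email": "ada@example.com", "city": "London"}
masked = mask_sensitive(row, {"email"}, DEMO_KEY)
print(masked["city"], len(masked["email"]))
```

Because the same input and key always produce the same output, records from different sources can still be matched on the masked field.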

Scalability:

As the volume of data grows, the ETL process must be able to scale to accommodate this growth.

Despite these challenges, ETL remains an important process for organizations, as it improves the quality of their data and supports informed decisions based on it. To overcome the challenges, organizations can implement best practices such as using automated ETL tools, monitoring the ETL process, and conducting regular data quality checks, so that the data stays accurate, consistent, and secure.

Conclusion:

ETL (Extract, Transform, Load) is a crucial process in data management that enables organizations to transform raw data into meaningful information. It helps organizations to integrate data from multiple sources, improve data quality, increase efficiency, and support informed decision-making. Despite its challenges, the benefits of ETL make it a valuable process for organizations.
