DevOps Interview Questions

What are the requirements to become a DevOps Engineer?

When filling DevOps roles, organizations look for a clear set of skills. The most important of these are:

- Experience with infrastructure automation tools like Chef, Puppet, Ansible, SaltStack, or Windows PowerShell DSC.

- Fluency in web languages like Ruby, Python, PHP, or Java.

- Interpersonal skills that help you communicate and collaborate across teams and roles.

If you have the above skills, then you are ready to start preparing for your DevOps interview! If not, don’t worry – our Blog will help you master DevOps.

1. How is DevOps different from Agile / SDLC?

Agile is a set of values and principles about how to produce, that is develop, software.

For example, if you have some ideas and you want to turn them into working software, you can use Agile values and principles to do that. But that software might only be working on a developer’s laptop or in a test environment. You want a way to move it into production infrastructure quickly, easily, repeatably, and safely. To do that, you need DevOps tools and techniques.

You can summarize by saying that the Agile software development methodology focuses on the development of software, whereas DevOps is responsible for both the development and the deployment of the software in the safest and most reliable way possible.

2. What are the fundamental differences between DevOps & Agile?

The differences between the two are listed in the table below.

| Features | DevOps | Agile |
| --- | --- | --- |
| Agility | Agility in both Development & Operations | Agility in only Development |
| Processes/Practices | Involves processes such as CI, CD, CT, etc. | Involves practices such as Agile Scrum, Agile Kanban, etc. |
| Key Focus Area | Timeliness & quality have equal priority | Timeliness is the main priority |
| Release Cycles/Development Sprints | Smaller release cycles with immediate feedback | Smaller release cycles |
| Source of Feedback | Feedback is from self (Monitoring tools) | Feedback is from customers |
| Scope of Work | Agility & need for Automation | Agility only |

3. What is the need for DevOps?

The need for DevOps comes from a general market trend: instead of releasing big sets of features, companies are trying to deliver small features to their customers through a series of release trains. This has many advantages, such as quick feedback from customers and better software quality, which in turn lead to high customer satisfaction. To achieve this, companies are required to:

- Increase deployment frequency.

- Lower the failure rate of new releases.

- Shorten the lead time between fixes.

- Reduce the mean time to recovery in the event of a new release crashing.

DevOps fulfills all these requirements and helps in achieving seamless software delivery. You can give examples of companies like Etsy, Google, and Amazon which have adopted DevOps to achieve levels of performance that were unthinkable even five years ago. They are doing tens, hundreds, or even thousands of code deployments per day while delivering world-class stability, reliability, and security.

4. Which are the top DevOps tools? Which tools have you worked on?

The most popular DevOps tools are mentioned below:

- Git: Version control system tool

- Jenkins: Continuous Integration tool

- Selenium: Continuous Testing tool

- Puppet, Chef, Ansible: Configuration Management and Deployment tools

- Nagios: Continuous Monitoring tool

- Docker: Containerization tool

You can also mention any other tool if you want, but make sure you include the above tools in your answer.

The second part of the answer has two possibilities:

- If you have experience with all the above tools, you can say: “I have worked on all these tools for developing good-quality software and deploying it easily, frequently, and reliably.”

- If you have experience with only some of the above tools, mention those tools and say: “I specialize in these tools and have an overview of the rest.”

5. How do all these tools work together?

Given below is a generic logical flow where everything gets automated for seamless delivery. However, this flow may vary from organization to organization as per the requirement.

- Developers develop the code, and this source code is managed by version control system tools like Git.

- Developers send this code to the Git repository, and any changes made to the code are committed to this repository.

- Jenkins pulls this code from the repository using the Git plugin and builds it using tools like Ant or Maven.

- Configuration management tools like Puppet deploy and provision the testing environment, and then Jenkins releases this code to the test environment, where testing is done using tools like Selenium.

- Once the code is tested, Jenkins sends it for deployment on the production server (the production server is also provisioned and maintained by tools like Puppet).

- After deployment, it is continuously monitored by tools like Nagios.

- Docker containers provide a testing environment to test the build features.

6. What are the advantages of DevOps?

Technical benefits:

- Continuous software delivery

- Less complex problems to fix

- Faster resolution of problems

Business benefits:

- Faster delivery of features

- More stable operating environments

- More time is available to add value (rather than fix/maintain).

7. Mention some of the core benefits of DevOps?

- Faster development of software and quick deliveries.

- DevOps methodology is flexible and adaptable to changes easily.

- Compared with previous software development models, there is less confusion about the project and product quality is higher.

- The gap between the development team and the operations team is bridged, that is, communication between the teams is improved.

- Efficiency is increased by the addition of automation of continuous integration and continuous deployment.

- Customer satisfaction is enhanced.

8. What is the most important thing DevOps helps us achieve?

In my view, the most important thing that DevOps helps us achieve is getting changes into production as quickly as possible while minimizing risks in software quality assurance and compliance. This is the primary objective of DevOps. However, you can add many other positive effects of DevOps.

For example, clearer communication and better working relationships between teams i.e. both the Ops team and Dev team collaborate together to deliver good quality software which in turn leads to higher customer satisfaction.

9. Explain with a use case where DevOps can be used in industry/real life.

Many industries use DevOps, so you can mention any of those use cases. You can also refer to the example below.

Etsy is a peer-to-peer e-commerce website focused on handmade or vintage items and supplies, as well as unique factory-manufactured items. Etsy struggled with slow, painful site updates that frequently caused the site to go down. This affected sales for millions of Etsy’s users who sold goods through the online marketplace, and risked driving them to competitors.

With the help of a new technical management team, Etsy transitioned from its waterfall model, which produced four-hour full-site deployments twice weekly, to a more agile approach. Today, it has a fully automated deployment pipeline, and its continuous delivery practices have reportedly resulted in more than 50 deployments a day with fewer disruptions.

10. Explain your understanding and expertise on both the software development side and the technical operations side of an organization you have worked with in the past.

For this answer, share your past experience and try to explain how flexible you were in your previous job. You can refer to the example below:

DevOps engineers almost always work in a 24/7 business-critical online environment. I was adaptable to on-call duties and was available to take up real-time, live-system responsibility. I successfully automated processes to support continuous software deployments. I have experience with public/private clouds, tools like Chef or Puppet, scripting and automation with tools like Python and PHP, and a background in Agile.

11. What are the anti-patterns of DevOps?

A pattern is a commonly followed practice. If a pattern commonly adopted by others does not work for your organization and you continue to follow it blindly, you are essentially adopting an anti-pattern. There are also myths about DevOps. Some of them include:

- DevOps is a process

- Agile equals DevOps

- We need a separate DevOps group

- DevOps will solve all our problems

- DevOps means Developers Managing Production

- DevOps is Development-driven release management

- DevOps is not development driven.

- DevOps is not IT Operations driven.

- We can’t do DevOps – We’re Unique

- We can’t do DevOps – We’ve got the wrong people.

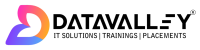

12. Explain the different phases in DevOps methodology?

The various phases of the DevOps lifecycle are as follows:

- Plan – In this stage, all the requirements of the project and everything regarding it, such as the time for each stage and the cost, are discussed. This gives everyone on the team a brief idea of the project.

- Code – The code is written here according to the client’s requirements. It is written in small pieces called units.

- Build – The units are built in this step.

- Test – Testing is done in this stage, and if mistakes are found, the code is returned for a rebuild.

- Integrate – All the units of code are integrated in this step.

- Deploy – The code is deployed in this step to the client’s environment.

- Operate – Operations are performed on the code if required.

- Monitor – The application is monitored here in the client’s environment.

13. What are the key metrics used to measure DevOps performance?

- Deployment frequency: This measures how frequently a new feature is deployed.

- Change failure rate: This measures the share of deployments that fail.

- Mean Time to Recovery (MTTR): The time taken to recover from a failed deployment.

14. What are the KPIs that are used for gauging the success of a DevOps team?

KPI stands for Key Performance Indicator. KPIs are used to measure the performance of a DevOps team and to identify and rectify mistakes. This helps the DevOps team increase productivity, which directly impacts revenue.

There are many KPIs that one can track in a DevOps team. The following are some of them:

- Change failure rate: This measures the share of deployments that fail.

- Mean time to recovery (MTTR): The time taken to recover from a failed deployment.

- Lead time: This measures the time taken to deploy a change to the production environment.

- Deployment frequency: This measures how frequently a new feature is deployed.

- Change volume: This measures how much code is changed relative to the existing code.

- Cycle time: This measures the total application development time.

- Customer tickets: This measures the number of errors detected by end users.

- Availability: This measures the uptime of the application, and therefore its downtime.

- Defect escape rate: This measures the share of defects that are found only after release rather than being caught earlier.

- Time of detection: This helps you understand whether your response time and application monitoring processes are functioning correctly.
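As a rough illustration of how three of these KPIs can be computed, here is a minimal Python sketch. The `Deployment` record and its field names are hypothetical, chosen only for this example; a real team would pull the same data from its deployment and incident tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    deployed_at: datetime
    failed: bool = False
    recovered_at: Optional[datetime] = None  # set only for failed deployments


def deployment_frequency(deployments, days):
    """Average number of deployments per day over the observed window."""
    return len(deployments) / days


def change_failure_rate(deployments):
    """Fraction of deployments that failed in production."""
    if not deployments:
        return 0.0
    return sum(d.failed for d in deployments) / len(deployments)


def mean_time_to_recovery(deployments):
    """Average time between a failed deployment and its recovery."""
    durations = [
        d.recovered_at - d.deployed_at
        for d in deployments
        if d.failed and d.recovered_at is not None
    ]
    if not durations:
        return timedelta(0)
    return sum(durations, timedelta(0)) / len(durations)


# Made-up sample data: four deployments over four days, two of which failed.
start = datetime(2024, 1, 1, 9, 0)
history = [
    Deployment(start),
    Deployment(start + timedelta(days=1), failed=True,
               recovered_at=start + timedelta(days=1, minutes=30)),
    Deployment(start + timedelta(days=2)),
    Deployment(start + timedelta(days=3), failed=True,
               recovered_at=start + timedelta(days=3, minutes=90)),
]

print(deployment_frequency(history, days=4))   # 1.0
print(change_failure_rate(history))            # 0.5
print(mean_time_to_recovery(history))          # 1:00:00
```

In an interview, it is usually enough to explain the idea: each KPI is a simple ratio or average over the deployment history.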

15. Why has DevOps become famous?

As we know, before DevOps there were two other software development models:

- Waterfall model

- Agile model

In the waterfall model, we have the limitations of one-way working and a lack of communication with customers. Agile overcame this by introducing communication between the customer and the company in the form of feedback. But this model has another issue: poor communication between the development team and the operations team, which slows down delivery to production. This is where DevOps comes in.

DevOps bridges the gap between the development team and the operations team by introducing automation, which increases the speed of delivery. Automation also integrates testing into the development stage, so bugs are found at a very early stage, which increases speed and efficiency.

16. How does AWS contribute to DevOps?

AWS (Amazon Web Services) is one of the best-known cloud providers. AWS offers several benefits for DevOps:

- Flexible resources: AWS provides the resources DevOps needs, and they are flexible to use.

- Scaling: We can create several instances on AWS with a lot of storage and computation power.

- Automation: AWS provides services for automating processes such as CI/CD.

- Security: AWS provides security services such as IAM to control access when we create instances.

Version Control System (VCS) Interview Questions

17. What is Version control?

Version Control is a system that records changes to a file or set of files over time so that you can recall specific versions later. Version control systems consist of a central shared repository where teammates can commit changes to a file or set of files. Then you can mention the uses of version control.

Version control allows you to:

- Revert files back to their previous state.

- Revert the entire project back to its previous state.

- Compare changes over time.

- See who last modified something that might be causing a problem.

- See who introduced an issue and when.

18. What are the benefits of using version control?

I suggest you include the following advantages of version control:

- With Version Control System (VCS), all the team members are allowed to work freely on any file at any time. VCS will later allow you to merge all the changes into a common version.

- All the past versions and variants are neatly packed up inside the VCS. When you need it, you can request any version at any time and you’ll have a snapshot of the complete project right at hand.

- Every time you save a new version of your project, your VCS requires you to provide a short description of what was changed. Additionally, you can see what exactly was changed in the file’s content. This allows you to know who has made what change in the project.

- A distributed VCS like Git allows all the team members to have the complete history of the project so if there is a breakdown in the central server you can use any of your teammate’s local Git repositories.

19. Describe branching strategies you have used?

This question is asked to test your branching experience, so explain how you have used branching in your previous job and what purpose it serves. You can refer to the points below:

- Feature branching

- A feature branch model keeps all of the changes for a particular feature inside of a branch. When the feature is fully tested and validated by automated tests, the branch is then merged into the master.

- Task branching

- In this model, each task is implemented on its own branch with the task key included in the branch name. It is easy to see which code implements which task, just look for the task key in the branch name.

- Release branching

- Once the develop branch has acquired enough features for a release, you can clone that branch to form a Release branch. Creating this branch starts the next release cycle, so no new features can be added after this point, only bug fixes, documentation generation, and other release-oriented tasks should go into this branch. Once it is ready to ship, the release gets merged into the master and tagged with a version number. In addition, it should be merged back into develop branch, which may have progressed since the release was initiated.

In the end, tell them that branching strategies vary from one organization to another, so you know the basic branching operations such as delete, merge, and checking out a branch.

20. Which VCS tool are you comfortable with?

You can just mention the VCS tool that you have worked on like this: “I have worked on Git and one major advantage it has over other VCS tools like SVN is that it is a distributed version control system.”

Distributed VCS tools do not necessarily rely on a central server to store all the versions of a project’s files. Instead, every developer “clones” a copy of a repository and has the full history of the project on their own hard drive.

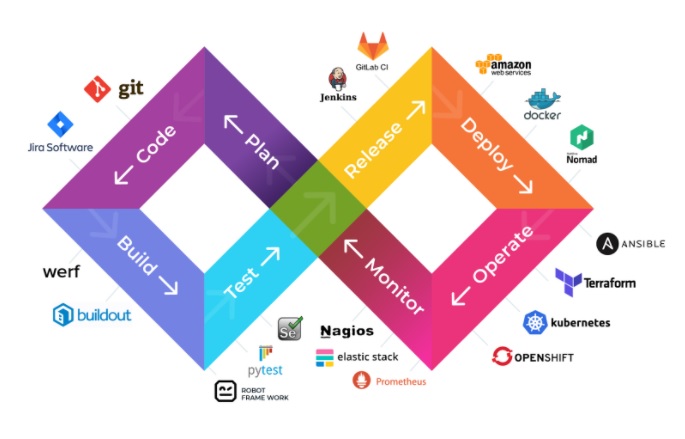

21. What is Git?

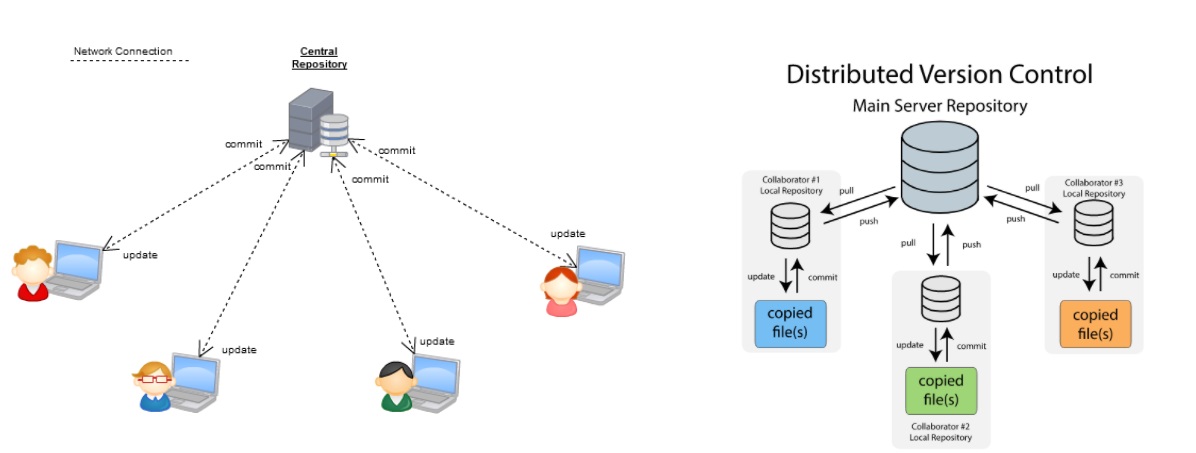

I will suggest that you attempt this question by first explaining the architecture of git as shown in the below diagram. You can refer to the explanation given below:

- Git is a Distributed Version Control system (DVCS). It can track changes to a file and allows you to revert back to any particular change.

- Its distributed architecture provides many advantages over other Version Control Systems (VCS) like SVN. One major advantage is that it does not rely on a central server to store all the versions of a project’s files. Instead, every developer “clones” a copy of the repository, shown in the diagram as “Local repository”, and has the full history of the project on their hard drive. When there is a server outage, all you need for recovery is one of your teammates’ local Git repositories.

- There is a central cloud repository as well, where developers can push their commits and share them with teammates. In the diagram, this is the “Remote repository” to which all collaborators send their changes.

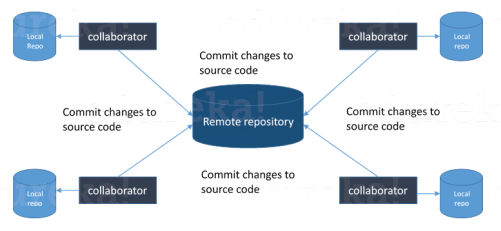

22. Explain some basic Git commands?

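Below are some basic Git commands:

- git init: Creates a new local repository.

- git clone: Copies an existing repository to your machine.

- git add: Stages changes for the next commit.

- git commit: Records the staged changes in the local repository.

- git status: Shows the state of the working directory and the staging area.

- git push: Sends local commits to a remote repository.

- git pull: Fetches changes from a remote repository and merges them into the current branch.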

23. In Git how do you revert a commit that has already been pushed and made public?

There can be two answers to this question so make sure that you include both because any of the below options can be used depending on the situation:

- Remove or fix the bad file in a new commit and push it to the remote repository. This is the most natural way to fix an error. Once you have made the necessary changes to the file, commit them and push the commit to the remote repository. To commit, I will use:

- git commit -m "commit message"

- Create a new commit that undoes all the changes that were made in the bad commit. To do this, I will use:

- git revert <name of bad commit>

24. How do you squash the last N commits into a single commit?

There are two options to squash the last N commits into a single commit. Include both of the below-mentioned options in your answer:

- If you want to write the new commit message from scratch, use the following command:

- git reset --soft HEAD~N && git commit

- If you want to start editing the new commit message with a concatenation of the existing commit messages, you need to extract those messages and pass them to git commit. For that I will use:

- git reset --soft HEAD~N && git commit --edit -m"$(git log --format=%B --reverse HEAD..HEAD@{1})"

25. What is Git bisect? How can you use it to determine the source of a (regression) bug?

I suggest you first give a short definition: Git bisect is used to find the commit that introduced a bug by using binary search. The command for Git bisect is:

git bisect <subcommand> <options>

Now that you have mentioned the command, explain what it does. This command uses a binary search algorithm to find which commit in your project’s history introduced a bug. You use it by first telling it a “bad” commit that is known to contain the bug, and a “good” commit that is known to be before the bug was introduced. Git bisect then picks a commit between those two endpoints and asks you whether the selected commit is “good” or “bad”. It continues narrowing down the range until it finds the exact commit that introduced the change.
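To make the binary search idea concrete, here is a small Python sketch of the search that git bisect performs conceptually. It is not Git’s implementation: the linear commit list, the commit names, and the `is_bad` check are hypothetical stand-ins for your history and your manual or scripted test.

```python
def find_first_bad(commits, is_bad):
    """Return the first bad commit and the number of commits tested.

    Assumes commits are ordered oldest to newest, commits[0] is known good,
    commits[-1] is known bad, and every commit after the first bad one is
    also bad.
    """
    good, bad = 0, len(commits) - 1
    steps = 0
    while bad - good > 1:
        mid = (good + bad) // 2
        steps += 1
        if is_bad(commits[mid]):
            bad = mid      # bug already present: look earlier
        else:
            good = mid     # bug not yet present: look later
    return commits[bad], steps


# Hypothetical history of 16 commits where the bug first appears in "c11".
commits = [f"c{i}" for i in range(16)]
first_bad, steps = find_first_bad(commits, lambda c: int(c[1:]) >= 11)
print(first_bad, steps)  # c11 4
```

With 16 commits, only 4 need to be tested, which is why bisecting scales well to long histories.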

26. What is Git rebase and how can it be used to resolve conflicts in a feature branch before the merge?

In my view, you should start by saying that git rebase is a command that will merge another branch into the branch where you are currently working, and move all of the local commits that are ahead of the rebased branch to the top of the history on that branch.

Once you have defined git rebase, give an example to show how it can be used to resolve conflicts in a feature branch before the merge: if a feature branch was created from master, and the master branch has since received new commits, git rebase can be used to move the feature branch to the tip of master.

The command effectively will replay the changes made in the feature branch at the tip of the master, allowing conflicts to be resolved in the process. When done with care, this will allow the feature branch to be merged into the master with relative ease and sometimes as a simple fast-forward operation.

27. How do you configure a Git repository to run code sanity-checking tools right before making commits, and preventing them if the test fails?

I suggest you first give a short introduction to sanity checking: a sanity or smoke test determines whether it is possible and reasonable to continue testing.

Then explain how to achieve this. It can be done with a simple script attached to the pre-commit hook of the repository. The pre-commit hook is triggered right before a commit is made, even before you are required to enter a commit message. In this script, you can run other tools, such as linters, and perform sanity checks on the changes being committed to the repository.

Finally, give an example. You can refer to the script below:

```sh
#!/bin/sh
# List staged .go files that were added, copied, or modified.
files=$(git diff --cached --name-only --diff-filter=ACM | grep '\.go$')
if [ -z "$files" ]; then
    exit 0
fi

# gofmt -l prints the names of files whose formatting differs from gofmt's.
unfmtd=$(gofmt -l $files)
if [ -z "$unfmtd" ]; then
    exit 0
fi

echo "Some .go files are not fmt'd"
exit 1
```

This script checks to see if any .go file that is about to be committed needs to be passed through the standard Go source code formatting tool gofmt. By exiting with a non-zero status, the script effectively prevents the commit from being applied to the repository.

28. How do you find a list of files that have changed in a particular commit?

For this answer, instead of just stating the command, explain what exactly it does. To get the list of files that changed in a particular commit, use the command:

git diff-tree -r {hash}

Given the commit hash, this will list all the files that were changed or added in that commit. The -r flag makes the command list individual files, rather than collapsing them into root directory names only.

You can also include the point below; it is optional, but it will help impress the interviewer.

The output will also include some extra information, which can be easily suppressed by including two flags:

git diff-tree --no-commit-id --name-only -r {hash}

Here --no-commit-id suppresses the commit hash in the output, and --name-only prints only the names of the changed files, without the mode, object ID, and status details.

29. How do you set up a script to run every time a repository receives new commits through push?

There are three ways to configure a script to run every time a repository receives new commits through push: define a pre-receive, update, or post-receive hook, depending on when exactly the script needs to be triggered.

- Pre-receive hook in the destination repository is invoked when commits are pushed to it. Any script bound to this hook will be executed before any references are updated. This is a useful hook to run scripts that help enforce development policies.

- The update hook works in a similar manner to the pre-receive hook and is also triggered before any updates are actually made. However, the update hook is called once for each ref (for example, each branch) being updated by the push, whereas the pre-receive hook runs once per push.

- Finally, the post-receive hook in the repository is invoked after the updates have been accepted into the destination repository. This is an ideal place to configure simple deployment scripts, invoke some continuous integration systems, dispatch notification emails to repository maintainers, etc.

Hooks are local to every Git repository and are not versioned. Scripts can either be created within the hooks directory inside the “.git” directory, or they can be created elsewhere and links to those scripts can be placed within the directory.
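A hook can be any executable file, so as an illustration here is a minimal Python sketch of the logic a post-receive hook might contain. Git passes one line per updated ref on standard input, in the form `<old-value> <new-value> <ref-name>`. The watched branch names, the sample hashes, and the "deploy" message are assumptions made for this example only.

```python
import sys


def parse_updates(lines):
    """Parse '<old> <new> <ref>' lines as passed to pre-receive/post-receive."""
    updates = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        old, new, ref = line.split(" ", 2)
        updates.append({"old": old, "new": new, "ref": ref})
    return updates


def is_deletion(update):
    """Git reports a deleted ref with an all-zero new object name."""
    return update["new"].strip("0") == ""


def refs_to_deploy(updates, watched=("refs/heads/master",)):
    """Return watched refs that were created or updated (not deleted)."""
    return [u["ref"] for u in updates if u["ref"] in watched and not is_deletion(u)]


def main(stream):
    for ref in refs_to_deploy(parse_updates(stream)):
        # Placeholder: a real hook would trigger a deployment or CI job here.
        print(f"{ref} updated, triggering deployment")


# Made-up input resembling what Git would send for one push.
sample = [
    "1111111111111111111111111111111111111111 2222222222222222222222222222222222222222 refs/heads/master\n",
    "3333333333333333333333333333333333333333 0000000000000000000000000000000000000000 refs/heads/old-feature\n",
]
main(sample)
```

In an actual hook file, the last line would be `main(sys.stdin)` instead of `main(sample)`, and the file would need to be executable and named post-receive inside the hooks directory.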

30. How will you know in Git if a branch has already been merged into the master?

I suggest you include both of the commands below:

git branch --merged lists the branches that have been merged into the current branch.

git branch --no-merged lists the branches that have not been merged.

31. Explain the difference between a centralized and distributed version control system (VCS)?

| Centralized Version Control | Distributed Version Control |
| --- | --- |
| Developers do not have a copy of the main repository on their local machine. | Every developer has a copy of the main repository on their local machine. |
| A network connection is needed to access the repository data, because there is no local copy. | No network connection is needed to access the repository data, because a full copy is available locally. |
| If the main server crashes, developers cannot access the repository. | If the main server crashes, developers can keep working from their local copies. |

32. What is the difference between Git Merge and Git Rebase?

Both are mechanisms for integrating changes from one branch into another. The difference is that with git merge, the log shows the complete history of commits, including where branches were merged.

With git rebase, however, the commits are rewritten and rearranged so that the log looks linear and simple to understand. This is also a drawback, since other team members can no longer see how the different commits were merged into one another.

33. Explain the difference between git fetch and git pull?

| Git Pull | Git Fetch |
| --- | --- |
| Git pull updates the working directory with the latest changes from the remote server. | Git fetch gets new data from a remote repository to the local repository. |
| Git pull gets the data to the local repository and merges it into the working directory. | Git fetch only gets the data to the local repository; the data is not merged into the working directory. |
| Command – git pull origin | Command – git fetch origin |

34. Can you explain the “Shift left to reduce failure” concept in DevOps?

Shift left is a concept used in DevOps to improve security, performance, and other quality attributes. For example, if we look at all the phases in DevOps, security is typically tested just before deployment. With the shift-left approach, security checks are moved into the development phase, which sits to the left of deployment in the lifecycle. This is not limited to development: such checks can be integrated into every phase, including before development and during testing. Finding errors at the very initial stages in this way raises the level of security.

Continuous Integration Interview Questions

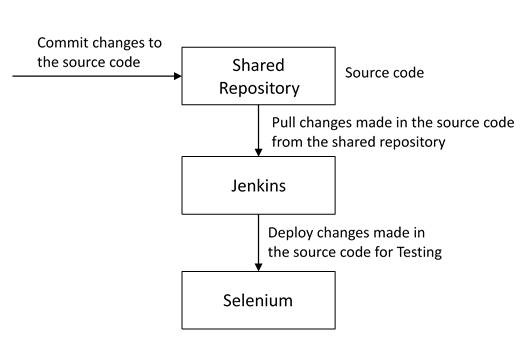

35. What is meant by Continuous Integration?

Continuous Integration (CI) is a development practice that requires developers to integrate code into a shared repository several times a day. Each check-in is then verified by an automated build, allowing teams to detect problems early.

I suggest that you explain how you implemented it in your previous job. You can refer to the steps given below, which follow the diagram shown above:

- Developers check out code in their private workspaces.

- When they are done with it they commit the changes to the shared repository (Version Control Repository).

- The CI server monitors the repository and checks out changes when they occur.

- The CI server then pulls these changes and builds the system and also runs unit and integration tests.

- The CI server will now inform the team of the successful build.

- If the build or test fails, the CI server will alert the team.

- The team will try to fix the issue at the earliest opportunity.

- This process keeps on repeating.
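The steps above can be sketched in a few lines of Python. This is only a toy model of what a CI server does for each commit, not how Jenkins or any specific tool is implemented. The `build`, `tests`, and `notify` callables and the commit IDs are hypothetical placeholders.

```python
from typing import Callable, List, Tuple


def run_ci(commit: str,
           build: Callable[[str], bool],
           tests: List[Tuple[str, Callable[[str], bool]]],
           notify: Callable[[str], None]) -> bool:
    """Build one commit, run its tests, and notify the team of the outcome."""
    if not build(commit):
        notify(f"{commit}: build FAILED")
        return False
    for name, test in tests:
        if not test(commit):
            notify(f"{commit}: test '{name}' FAILED")
            return False
    notify(f"{commit}: build and tests passed")
    return True


# Hypothetical setup: every build succeeds, one commit breaks integration tests.
messages = []
tests = [
    ("unit", lambda commit: True),
    ("integration", lambda commit: commit != "bad123"),
]
for commit in ["abc001", "bad123"]:
    run_ci(commit, build=lambda c: True, tests=tests, notify=messages.append)

print(messages)
```

The key point to convey is the feedback loop: every commit is built and tested automatically, and the team is told the result either way.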

36. Why do you need a Continuous Integration of Dev & Testing?

You should focus on the need for Continuous Integration. The Continuous Integration of Dev and Testing improves the quality of software and reduces the time taken to deliver it, by replacing the traditional practice of testing after completing all development. It allows the Dev team to easily detect and locate problems early because developers need to integrate code into a shared repository several times a day (more frequently). Each check-in is then automatically tested.

37. What are the success factors for Continuous Integration?

- Maintain a code repository

- Automate the build

- Make the build self-testing

- Everyone commits to the baseline every day

- Every commit (to baseline) should be built

- Keep the build fast

- Test in a clone of the production environment

- Make it easy to get the latest deliverables

- Everyone can see the results of the latest build

- Automate deployment.

38. Explain how you can move or copy Jenkins from one server to another?

I will approach this task by copying the jobs directory from the old server to the new one. There are multiple ways to do that; I have mentioned them below:

You can:

- Move a job from one installation of Jenkins to another by simply copying the corresponding job directory.

- Make a copy of an existing job by making a clone of a job directory by a different name.

- Rename an existing job by renaming a directory. Note that if you change a job name you will need to change any other job that tries to call the renamed job.

39. Explain how you can create a backup and copy files in Jenkins?

The answer to this question is straightforward. To create a backup, all you need to do is periodically back up your JENKINS_HOME directory. This contains all of your build job configurations, your slave node configurations, and your build history. To create a backup of your Jenkins setup, just copy this directory. You can also copy a job directory to clone or replicate a job, or rename the directory to rename the job.
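As a minimal sketch of that idea, the Python functions below archive a JENKINS_HOME directory and clone a job by copying its directory. They use only the standard library. The archive name is an arbitrary choice for this example, and it assumes jobs live under a `jobs` subdirectory of JENKINS_HOME.

```python
import shutil
import time
from pathlib import Path


def backup_jenkins_home(jenkins_home, backup_dir):
    """Archive the whole JENKINS_HOME directory into a timestamped .tar.gz file."""
    jenkins_home = Path(jenkins_home)
    backup_dir = Path(backup_dir)
    backup_dir.mkdir(parents=True, exist_ok=True)
    stamp = time.strftime("%Y%m%d-%H%M%S")
    base_name = backup_dir / f"jenkins-home-{stamp}"
    # make_archive appends the ".tar.gz" suffix and returns the archive path.
    return shutil.make_archive(str(base_name), "gztar", root_dir=str(jenkins_home))


def copy_job(jenkins_home, job_name, new_name):
    """Clone a job by copying its directory under jobs/ to a new name."""
    jobs = Path(jenkins_home) / "jobs"
    return shutil.copytree(jobs / job_name, jobs / new_name)
```

A call such as `backup_jenkins_home("/var/lib/jenkins", "/backups/jenkins")` would be typical, but both paths are assumptions about your installation. Keep the backup directory outside JENKINS_HOME so the archive does not include earlier backups.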

40. Explain how you can set up a Jenkins job?

My approach to this answer will be to first mention how to create a Jenkins job. Go to Jenkins’s top page, select “New Job”, then choose “Build a free-style software project”.

Then you can tell the elements of this freestyle job:

- Optional SCM, such as CVS or Subversion where your source code resides.

- Optional triggers to control when Jenkins will perform builds.

- Some sort of build script that performs the build (ant, maven, shell script, batch file, etc.) where the real work happens.

- Optional steps to collect information out of the build, such as archiving the artifacts and/or recording Javadoc and test results.

- Optional steps to notify other people/systems of the build result, such as sending e-mails, IMs, updating the issue tracker, etc.

41. Mention some of the useful plugins in Jenkins?

Below, I have mentioned some important Plugins:

- Maven 2 project

- Amazon EC2

- HTML publisher

- Copy artifact

- Join

- Green Balls

I feel these are the most useful plugins. If you want to include any other plugin that is not mentioned above, you can add it as well. But make sure you first mention the above-stated plugins and then add your own.

42. How will you secure Jenkins?

The way I secure Jenkins is mentioned below:

- Ensure global security is on.

- Ensure that Jenkins is integrated with my company’s user directory with the appropriate plugin.

- Ensure that matrix/Project matrix is enabled to fine-tune access.

- Automate the process of setting rights/privileges in Jenkins with a custom version-controlled script.

- Limit physical access to Jenkins data/folders.

- Periodically run security audits on the same.

Jenkins is one of the many popular tools that are used extensively in DevOps.

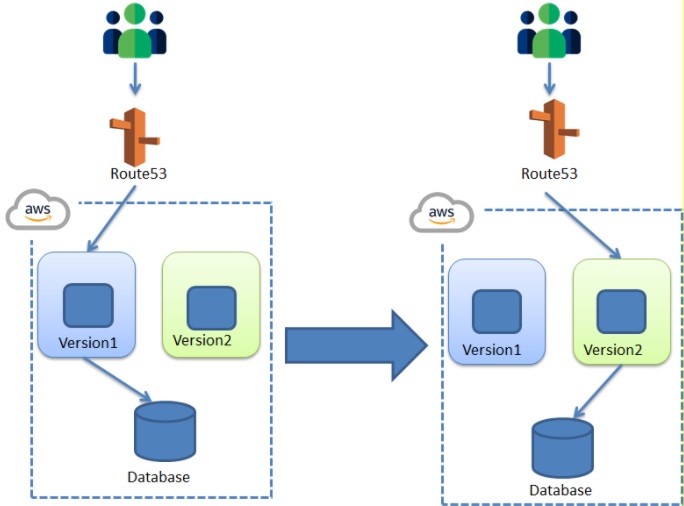

43. What is the Blue/Green Deployment Pattern?

This is a continuous deployment strategy that is generally used to decrease downtime. It works by transferring traffic from one instance to another.

For example, suppose we want to release a new version of the code and replace the old version with it. The old version is considered to be in the blue environment and the new version in the green environment. The new version is the existing old version with some changes applied.

To run the new version, we need to transfer the traffic from the old instance to the new instance, that is, from the blue environment to the green environment. The new version runs on the green instance, and the traffic is gradually transferred to it. The blue instance is kept idle and used for rollback.

In Blue-Green deployment, the application is not deployed in the same environment. Instead, a new server or environment is created where the new version of the application is deployed. Once the new version of the application is deployed in a separate environment, the traffic to the old version of the application is redirected to the new version of the application.

We follow the Blue-Green Deployment model so that if any problem is encountered in the production environment with the new version of the application, the traffic can be immediately redirected to the previous Blue environment, with minimal or no impact on the business. The following diagram shows Blue-Green Deployment.
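The switch-and-rollback idea can be sketched in a few lines. This is only a toy model, assuming a single router object that decides which environment is live; the class and method names are hypothetical, and a real setup would change a load balancer or DNS record instead.

```python
class BlueGreenRouter:
    """Tracks which of two environments receives live traffic."""

    def __init__(self, blue_version: str):
        # Blue starts out live; green is empty until a release is deployed to it.
        self.environments = {"blue": blue_version, "green": None}
        self.live = "blue"

    @property
    def idle(self) -> str:
        return "green" if self.live == "blue" else "blue"

    def deploy_to_idle(self, version: str) -> None:
        # The new version goes to the idle environment; live traffic is untouched.
        self.environments[self.idle] = version

    def switch(self) -> None:
        if self.environments[self.idle] is None:
            raise RuntimeError("nothing deployed to the idle environment")
        self.live = self.idle

    def rollback(self) -> None:
        # Rolling back is simply switching to the previous environment again.
        self.switch()

    def serving(self) -> str:
        return self.environments[self.live]
```

Because the old environment is left untouched after the switch, rollback needs no redeployment, which is what keeps the downtime low.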

44. What are anti-patterns in DevOps?

A pattern can be defined as an established idea of how to solve a problem. An anti-pattern, by contrast, is an approach that appears to solve the problem now but may end up damaging the system [i.e., it shows how not to approach a problem].

Some of the anti-patterns we see in DevOps are:

- DevOps is a process and not a culture.

- DevOps is nothing but Agile.

- There should be a separate DevOps group.

- DevOps solves every problem.

- DevOps equates to developers running a production environment.

- DevOps follows Development-driven management.

- DevOps does not focus much on development.

- As we are a unique organization, we don’t follow the masses and hence we won’t implement DevOps.

- We don’t have the right set of people, hence we can’t implement DevOps culture.

45. How will you approach a project that needs to implement DevOps?

First, if we want to approach a project that needs DevOps, we need to know a few concepts:

- Any programming language relevant to the project [C, C++, Java, Python, etc.].

- Get an idea of operating systems for management purposes [like memory management, disk management, etc.].

- Get an idea about networking and security concepts.

- Get an idea about what DevOps is, what continuous integration, continuous development, continuous delivery, continuous deployment, and monitoring are, and which tools are used in the various phases [like Git, Docker, Jenkins, etc.].

- After this, interact with the other teams and design a roadmap for the process.

- Once all teams are clear on the plan, create a proof of concept and start according to the project plan.

- Now the project is ready to go through the phases of DevOps: version control, integration, testing, deployment, delivery, and monitoring.

Continuous Testing Interview Questions

46. What is Continuous Testing?

Continuous Testing is the process of executing automated tests as part of the software delivery pipeline to obtain immediate feedback on the business risks associated with the latest build. In this way, each build is tested continuously, allowing Development teams to get fast feedback so that they can prevent those problems from progressing to the next stage of the Software delivery life-cycle. This dramatically speeds up a developer’s workflow as there’s no need to manually rebuild the project and re-run all tests after making changes.

47. What is Automation Testing?

Automation testing, or Test Automation, is the process of automating the manual steps used to test the application/system under test. Automation testing involves the use of separate testing tools which let you create test scripts that can be executed repeatedly and don’t require any manual intervention.

48. What are the benefits of Automation Testing?

I have listed down some advantages of automation testing. Include these in your answer and you can add your own experience of how Continuous Testing helped your previous company:

- Supports execution of repeated test cases

- Aids in testing a large test matrix

- Enables parallel execution

- Encourages unattended execution

- Improves accuracy thereby reducing human-generated errors

- Saves time and money

49. How to automate Testing in the DevOps lifecycle?

I have mentioned a generic flow below which you can refer to:

In DevOps, developers are required to commit all the changes made in the source code to a shared repository. Continuous Integration tools like Jenkins will pull the code from this shared repository every time a change is made in the code and deploy it for Continuous Testing which is done by tools like Selenium as shown in the below diagram.

In this way, any change in the code is continuously tested, unlike the traditional approach.

50. Why is Continuous Testing important for DevOps?

You can answer this question by saying, “Continuous Testing allows any change made in the code to be tested immediately. This avoids the problems created by having “big-bang” testing left until the end of the cycle such as release delays and quality issues. In this way, Continuous Testing facilitates more frequent and good quality releases.”

51. What are the key elements of Continuous Testing tools?

Key elements of Continuous Testing are:

- Risk Assessment: It covers risk mitigation tasks, technical debt, quality assessment, and test coverage optimization to ensure the build is ready to progress toward the next stage.

- Policy Analysis: It ensures all processes align with the organization’s evolving business needs and that compliance demands are met.

- Requirements Traceability: It ensures true requirements are met and rework is not required. An objective assessment is used to identify which requirements are at risk, working as expected, or requiring further validation.

- Advanced Analysis: It uses automation in areas such as static code analysis, change impact analysis, and scope assessment/prioritization to prevent defects in the first place and accomplish more within each iteration.

- Test Optimization: It ensures tests yield accurate outcomes and provide actionable findings. Aspects include Test Data Management, Test Optimization Management, and Test Maintenance.

- Service Virtualization: It ensures access to real-world testing environments. Service virtualization provides virtual forms of the required testing stages, cutting the time wasted on test environment setup and availability.

52. Which Testing tool are you comfortable with and what are the benefits of that tool?

Here mention the testing tool that you have worked with and accordingly frame your answer. I have mentioned an example below:

I have worked on Selenium to ensure high-quality and more frequent releases.

Some advantages of Selenium are:

- It is free and open source

- It has a large user base and helpful communities

- It has cross-browser compatibility (Firefox, Chrome, Internet Explorer, Safari, etc.)

- It has great platform compatibility (Windows, Mac OS, Linux, etc.)

- It supports multiple programming languages (Java, C#, Ruby, Python, Perl, etc.)

- It has fresh and regular repository developments

- It supports distributed testing.

53. What are the Testing types supported by Selenium?

Selenium supports two types of testing:

Regression Testing: It is the act of retesting a product around an area where a bug was fixed.

Functional Testing: It refers to the testing of software features (functional points) individually.

54. What is Selenium IDE?

Selenium IDE is an integrated development environment for Selenium scripts. It is implemented as a Firefox extension, and allows you to record, edit, and debug tests. Selenium IDE includes the entire Selenium Core, allowing you to easily and quickly record and play back tests in the actual environment that they will run in.

Now include some advantages in your answer. With autocomplete support and the ability to move commands around quickly, Selenium IDE is the ideal environment for creating Selenium tests no matter what style of tests you prefer.

55. What is the difference between Assert and Verify commands in Selenium?

I have mentioned the differences between Assert and Verify commands below:

- The assert command checks whether the given condition is true or false. Let’s say we assert whether the given element is present on the web page or not. If the condition is true, then the program control will execute the next test step. But, if the condition is false, the execution would stop and no further test would be executed.

- Verify command also checks whether the given condition is true or false. Irrespective of the condition being true or false, the program execution doesn’t halt i.e. any failure during verification would not stop the execution and all the test steps would be executed.
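The difference between the two commands can be sketched without Selenium at all. The example below is a simplified model, assuming each test step is just a name plus a pass/fail flag; the function names are hypothetical and do not correspond to any Selenium API.

```python
def run_with_assert(steps):
    """Hard check: stop at the first failing step."""
    executed, failures = [], []
    for name, passed in steps:
        executed.append(name)
        if not passed:
            failures.append(f"{name} failed")
            break  # execution halts, later steps never run
    return executed, failures


def run_with_verify(steps):
    """Soft check: record the failure and keep going."""
    executed, failures = [], []
    for name, passed in steps:
        executed.append(name)
        if not passed:
            failures.append(f"{name} failed")  # logged, but execution continues
    return executed, failures


# Hypothetical steps: the second check fails.
STEPS = [("open page", True), ("find logo", False), ("click login", True)]
```

Running both functions on STEPS reports the same failure, but only the verify-style run reaches the "click login" step.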

56. How to launch Browser using WebDriver?

Use one of the following statements to launch a browser, depending on which browser you need:

WebDriver driver = new FirefoxDriver();

WebDriver driver = new ChromeDriver();

WebDriver driver = new InternetExplorerDriver();

57. When should I use Selenium Grid?

Selenium Grid can be used to execute the same or different test scripts on multiple platforms and browsers concurrently to achieve distributed test execution. This allows testing under different environments and saves execution time remarkably.
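To make the time saving concrete, here is a minimal sketch of running one test on several browser/platform combinations concurrently. It does not talk to a real Grid: the target list and the stand-in test function are assumptions for illustration, and threads play the role that Grid nodes would play.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical browser/platform combinations; a real Grid would supply these as nodes.
TARGETS = [("firefox", "linux"), ("chrome", "windows"), ("safari", "mac")]


def fake_login_test(browser, platform):
    # Stand-in for a real WebDriver test; it only reports where it "ran".
    return f"login ok on {browser}/{platform}"


def run_everywhere(test, targets, max_workers=3):
    """Run the same test once per target, concurrently, and collect the results."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        outcomes = list(pool.map(lambda target: test(*target), targets))
    return dict(zip(targets, outcomes))
```

With real tests, the total run time approaches that of the slowest target rather than the sum of all targets, which is the main benefit of distributed execution.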


58. Can you differentiate between continuous testing and automation testing?

| Continuous Testing | Automation Testing |

| Continuous Testing is a process that involves executing all the automated test cases as a part of the software delivery pipeline. | Automation testing is a process that uses tools to test the code repeatedly without manual intervention. |

| This process mainly focuses on business risks. | This process mainly focuses on a bug-free environment. |

| It’s comparatively slower than automation testing. | It’s comparatively faster than continuous testing. |

Configuration Management Interview Questions

59. What are the goals of Configuration management processes?

The purpose of Configuration Management (CM) is to ensure the integrity of a product or system throughout its life-cycle by making the development or deployment process controllable and repeatable, therefore creating a higher quality product or system. The CM process allows orderly management of system information and system changes for purposes such as:

- Revise capability

- Improve performance, reliability, or maintainability

- Extend life

- Reduce cost

- Reduce risk and liability

- Correct defects

60. What is the difference between Asset Management and Configuration Management?

Given below are a few differences between Asset Management and Configuration Management:

61. What is the difference between an Asset and a Configuration Item?

In my opinion, you should first explain what an Asset is. It has a financial value along with a depreciation rate attached to it. IT assets are just a subset of all assets. Anything that has a cost and that the organization uses for its asset value calculation and related tax benefits falls under Asset Management, and such an item is called an asset.

Configuration Items on the other hand may or may not have financial values assigned to them. It will not have any depreciation linked to it. Thus, its life would not be dependent on its financial value but will depend on the time till that item becomes obsolete for the organization.

Now you can give an example that can showcase the similarity and differences between both:

1) Similarity:

Server – It is both an asset as well as a CI.

2) Difference:

Building – It is an asset but not a CI.

Document – It is a CI but not an asset

62. What do you understand by “Infrastructure as code”? How does it fit into the DevOps methodology? What purpose does it achieve?

Infrastructure as Code (IaC) is an approach to IT infrastructure in which operations teams automatically manage and provision infrastructure through code, rather than using a manual process.

To deploy faster, companies treat infrastructure like software: as code that can be managed with DevOps tools and processes. These tools let you make infrastructure changes more easily, rapidly, safely, and reliably.

63. Which among Puppet, Chef, SaltStack, and Ansible is the best Configuration Management (CM) tool? Why?

This depends on the organization’s needs, so mention a few points about each of these tools:

Puppet is the oldest and most mature CM tool. Puppet is a Ruby-based Configuration Management tool, but while it has some free features, much of what makes Puppet great is only available in the paid version. Organizations that don’t need a lot of extras will find Puppet useful, but those needing more customization will probably need to upgrade to the paid version.

Chef is written in Ruby, so it can be customized by those who know the language. It also includes free features, plus it can be upgraded from open source to enterprise-level if necessary. On top of that, it’s a very flexible product.

Ansible is a very secure option since it uses Secure Shell. It’s a simple tool to use, but it does offer a number of other services in addition to configuration management. It’s very easy to learn, so it’s perfect for those who don’t have a dedicated IT staff but still need a configuration management tool.

SaltStack is a python-based open-source CM tool made for larger businesses, but its learning curve is fairly low.

64. What is Puppet?

Puppet is a Configuration Management tool that is used to automate administration tasks.

Now you should describe its architecture and how Puppet manages its Agents. Puppet has a Master-Slave architecture in which the Slave first has to send a certificate signing request to the Master, and the Master has to sign that certificate in order to establish a secure connection between Puppet Master and Puppet Slave, as shown in the diagram below. The Puppet Slave then sends a request to the Puppet Master, and the Puppet Master sends the configuration back to the Slave.

65. Before a client can authenticate with the Puppet Master, its certs need to be signed and accepted. How will you automate this task?

The easiest way is to enable auto-signing in puppet.conf

Do mention that this is a security risk. If you still want to do this:

- Firewall your puppet master – restrict port tcp/8140 to only networks that you trust.

- Create puppet masters for each ‘trust zone’, and only include the trusted nodes in that Puppet masters manifest.

- Never use a full wildcard such as *.
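A middle ground between manual signing and blanket auto-signing is policy-based autosigning, where the autosign setting points to an executable that decides per request. The sketch below assumes Puppet's contract for such executables, namely that the certname arrives as the first command-line argument and that exit status 0 means "sign"; the trusted suffix and the function names are hypothetical.

```python
# Hypothetical trust zone: only hosts under this suffix are signed automatically.
TRUSTED_SUFFIXES = (".build.example.internal",)


def is_trusted(certname: str) -> bool:
    """Return True only for certnames inside an explicitly trusted zone."""
    name = certname.strip().lower()
    # Reject wildcards outright, in line with the advice above.
    if "*" in name:
        return False
    return name.endswith(TRUSTED_SUFFIXES)


def main(argv: list) -> int:
    """Return the exit status: 0 asks Puppet to sign, non-zero refuses."""
    if len(argv) < 2:
        return 1
    return 0 if is_trusted(argv[1]) else 1
```

To use something like this as an actual policy script, the file would end with a call such as `sys.exit(main(sys.argv))` and be made executable. A suffix check alone is weak evidence of identity, so the firewall and trust-zone measures listed above still apply.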

66. Describe the most significant gain you made from automating a process through Puppet.

For this answer, I suggest you explain your past experience with Puppet. You can refer to the example below:

I automated the configuration and deployment of Linux and Windows machines using Puppet. In addition to shortening the processing time from one week to 10 minutes, I used the roles and profiles pattern and documented the purpose of each module in README to ensure that others could update the module using Git.

67. Which open-source or community tools do you use to make Puppet more powerful?

Over here, you need to mention the tools and how you have used those tools to make Puppet more powerful. Below is one example for your reference:

Changes and requests are ticketed through Jira and we manage requests through an internal process. Then, we use Git and Puppet’s Code Manager app to manage Puppet code in accordance with best practices. Additionally, we run all of our Puppet changes through our continuous integration pipeline in Jenkins using the beaker testing framework.

68. What are Puppet Manifests?

It is a very important question so make sure you go in the correct flow. According to me, you should first define Manifests. Every node (or Puppet Agent) has got its configuration details in Puppet Master, written in the native Puppet language. These details are written in the language which Puppet can understand and are termed Manifests. They are composed of Puppet code and their filenames use the .pp extension.

Now give an example. You can write a manifest in Puppet Master that creates a file and installs apache on all Puppet Agents (Slaves) connected to the Puppet Master.

69. What is a Puppet Module and how is it different from a Puppet Manifest?

A Puppet Module is a collection of Manifests and data (such as facts, files, and templates), and they have a specific directory structure. Modules are useful for organizing your Puppet code because they allow you to split your code into multiple Manifests. It is considered best practice to use Modules to organize almost all of your Puppet Manifests.

Puppet programs are called Manifests which are composed of Puppet code and their file names use the .pp extension.

70. What is Facter in Puppet?

You are expected to answer what exactly Facter does in Puppet so according to me, you should say, “Facter gathers basic information (facts) about Puppet Agent such as hardware details, network settings, OS type and version, IP addresses, MAC addresses, SSH keys, and more. These facts are then made available in Puppet Master’s Manifests as variables.”
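To give a feel for what "facts" look like, the sketch below collects a few comparable values with the Python standard library. This is not Facter and does not use its API; the fact names are only loosely modeled on the kind of keys Facter reports and should be read as assumptions.

```python
import platform
import socket


def gather_facts() -> dict:
    """Collect a handful of basic system facts as a flat dictionary."""
    return {
        "hostname": socket.gethostname(),
        "kernel": platform.system(),            # e.g. the OS family name
        "kernelrelease": platform.release(),
        "architecture": platform.machine(),
        "python_version": platform.python_version(),
    }
```

In Puppet, values like these become variables that manifests can branch on, for example to pick a package name based on the operating system.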

71. What is Chef?

Chef is a powerful automation platform that transforms infrastructure into code. Chef is a tool for which you write scripts that are used to automate processes. What processes? Pretty much anything related to IT.

Now you can explain the architecture of Chef, it consists of:

- Chef Server: The Chef Server is the central store of your infrastructure’s configuration data. The Chef Server stores the data necessary to configure your nodes and provides search, a powerful tool that allows you to dynamically drive node configuration based on data.

- Chef Node: A Node is any host that is configured using Chef-client. Chef-client runs on your nodes, contacting the Chef Server for the information necessary to configure the node. Since a Node is a machine that runs the Chef-client software, nodes are sometimes referred to as “clients”.

- Chef Workstation: A Chef Workstation is a host you use to modify your cookbooks and other configuration data.

72. What is a resource in Chef?

A Resource represents a piece of infrastructure and its desired state, such as a package that should be installed, a service that should be running, or a file that should be generated.

You should explain the functions of Resources that include the following points:

- Describes the desired state for a configuration item.

- Declares the steps needed to bring that item to the desired state.

- Specifies a resource type such as package, template, or service.

- Lists additional details (also known as resource properties), as necessary.

- Are grouped into recipes, which describe working configurations.

73. What do you mean by a recipe in Chef?

For this answer, I will suggest you use the above-mentioned flow: first, define Recipe. A Recipe is a collection of Resources that describes a particular configuration or policy. A Recipe describes everything that is required to configure part of a system.

After the definition, explain the functions of Recipes by including the following points:

- Install and configure software components.

- Manage files.

- Deploy applications.

- Execute other recipes.

74. How does a Cookbook differ from a Recipe in Chef?

You can simply say, “a Recipe is a collection of Resources, and primarily configures a software package or some piece of infrastructure. A Cookbook groups together Recipes and other information in a way that is more manageable than having just Recipes alone.”

75. What happens when you don’t specify a Resource’s action in Chef?

My suggestion is to first give a direct answer: when you don’t specify a resource’s action, Chef applies the default action.

Now explain this with an example, the below resource:

file 'C:\Users\Administrator\chef-repo\settings.ini' do

  content 'greeting=hello world'

end

is the same as the below resource:

file 'C:\Users\Administrator\chef-repo\settings.ini' do

  action :create

  content 'greeting=hello world'

end

because :create is the file Resource’s default action.

76. What is an Ansible module?

Modules are considered to be the units of work in Ansible. Each module is mostly standalone and can be written in a standard scripting language such as Python, Perl, Ruby, bash, etc. One of the guiding properties of modules is idempotency, which means that even if an operation is repeated multiple times e.g. upon recovery from an outage, it will always place the system in the same state.
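Idempotency is easier to see in code than in prose. The following sketch is not an Ansible module; it is a plain function, with a hypothetical name, that ensures a line is present in a list of configuration lines and reports whether it had to change anything.

```python
from typing import List, Tuple


def ensure_line(lines: List[str], line: str) -> Tuple[List[str], bool]:
    """Return the desired state plus a flag saying whether a change was needed."""
    if line in lines:
        # Already in the desired state: do nothing and report "unchanged".
        return lines, False
    return lines + [line], True
```

Applying the function a second time to its own result leaves the data unchanged and returns False. This mirrors how a repeated run of an idempotent task reports no changes instead of appending the line again.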

77. What are playbooks in Ansible?

Playbooks are Ansible’s configuration, deployment, and orchestration language. They can describe a policy you want your remote systems to enforce or a set of steps in a general IT process. Playbooks are designed to be human-readable and are developed in a basic text language.

At a basic level, playbooks can be used to manage configurations of and deployments to remote machines.

78. How do I see a list of all of the ansible_ variables?

Ansible by default gathers “facts” about the machines under management, and these facts can be accessed in Playbooks and in templates. To see a list of all of the facts that are available about a machine, you can run the “setup” module as an ad-hoc action:

ansible -m setup hostname

This will print out a dictionary of all of the facts that are available for that particular host.

79. How can I set deployment orders for applications?

WebLogic Server 8.1 allows you to select the load order for applications. See the Application MBean Load Order attribute in the Application. WebLogic Server deploys server-level resources (first JDBC and then JMS) before deploying applications. Applications are deployed in this order: connectors, then EJBs, then Web Applications. If the application is an EAR, the individual components are loaded in the order in which they are declared in the application.xml deployment descriptor.

80. Can I refresh the static components of a deployed application without having to redeploy the entire application?

Yes, you can use weblogic.Deployer to specify a component and target a server, using the following syntax:

java weblogic.Deployer -adminurl http://admin:7001 -name appname -targets server1,server2 -deploy jsps/*.jsp

81. How do I turn the auto-deployment feature off?

The auto-deployment feature checks the applications folder every three seconds to determine whether there are any new applications or any changes to existing applications and then dynamically deploys these changes.

The auto-deployment feature is enabled for servers that run in development mode. To disable the auto-deployment feature, use one of the following methods to place servers in production mode:

- In the Administration Console, click the name of the domain in the left pane, then select the Production Mode checkbox in the right pane.

- At the command line, include the following argument when starting the domain’s Administration Server: -Dweblogic.ProductionModeEnabled=true

Production mode is set for all WebLogic Server instances in a given domain.

82. When should I use the external_stage option?

Set -external_stage using weblogic.Deployer if you want to stage the application yourself, and prefer to copy it to its target by your own means.

Ansible and Puppet are two of the most popular configuration management tools among DevOps engineers.

83. What is the use of SSH?

Generally, SSH is used to connect two computers and work on them remotely. SSH is mostly used by the operations team, because their management tasks require administering systems remotely. Developers also use SSH, but comparatively less than the operations team, as most of the time they work on their local systems. In DevOps, the development team and the operations team collaborate and work together, so SSH is also used when the operations team faces a problem and needs assistance from the development team.

84. Can you tell me something about Memcached?

Memcached is a Free & open-source, high-performance, distributed memory object caching system.

This is generally used in the management of memory in dynamic web applications by caching the data in RAM. This helps to reduce the frequency of fetching from external sources. This also helps in speeding up dynamic web applications by alleviating database load.

Conclusion: DevOps is a culture of collaboration between the Development team and operation team to work together to bring out an efficient and fast software product.

So these are a few top DevOps interview questions that are covered in this blog. This blog will be helpful to prepare for a DevOps interview.

85. Do you know about post-mortem meetings in DevOps?

By the name, we can say it is a type of meeting which is conducted at the end of the project. In this meeting, all the teams come together and discuss the failures in the current project. Finally, they will conclude how to avoid them and what measures need to be taken in the future to avoid these failures.

86. What does CAMS stand for in DevOps?

In DevOps, CAMS stands for Culture, Automation, Measurement, and Sharing.

- Culture: Culture is like a base of DevOps. It is implementing all the operations between the operations team and development team in a particular manner to make things comfortable for the completion of the software production.

- Automation: Automation is one of the key features of DevOps. It is used to reduce the time gap between processes such as testing and deployment. In traditional software development methods, only one team works at a time. But in DevOps, all the teams can work together because automation is in place: changes made by one team are reflected for the other teams to work on.

- Measurement: Measurement in the CAMS model of DevOps is related to measuring crucial factors in the software development process that can indicate the overall performance of the team, such as income, costs, revenue, mean time between failures, etc. The most crucial aspect of Measurement is to pick the right metrics to track. At the same time, to push the team for better performance, one also needs to incentivize the correct metrics.

- Sharing: A sharing culture plays a key role in DevOps, as it helps spread knowledge to other people on the team and increases the number of people who know DevOps. This culture can be strengthened by holding regular Q and A sessions with teams, so that everyone gives their insight into the problems faced; the problems are solved quickly and the team gains knowledge in the process.
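As a small worked example for the Measurement point, mean time between failures (MTBF) is commonly computed as total operating time divided by the number of failures in that period. The function below is a sketch with hypothetical names and hour-based units chosen for this example.

```python
def mean_time_between_failures(total_uptime_hours: float, failure_count: int) -> float:
    """MTBF = total operating time / number of failures observed in that time."""
    if failure_count <= 0:
        raise ValueError("MTBF is undefined without at least one failure")
    return total_uptime_hours / failure_count
```

For instance, a service that ran for 720 hours and failed 3 times has an MTBF of 240 hours. Tracking this figure over successive releases shows whether reliability is improving.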

Continuous Monitoring Interview Questions

87. Why is Continuous monitoring necessary?

Continuous Monitoring allows timely identification of problems or weaknesses and quick corrective action that helps reduce the expenses of an organization. Continuous monitoring provides a solution that addresses three operational disciplines known as:

- continuous audit

- continuous controls monitoring

- continuous transaction inspection

88. What is Nagios?

Nagios is one of the monitoring tools. It is used for Continuous monitoring of systems, applications, services, business processes, etc in a DevOps culture. In the event of a failure, Nagios can alert technical staff of the problem, allowing them to begin remediation processes before outages affect business processes, end-users, or customers. With Nagios, you don’t have to explain why an unseen infrastructure outage affects your organization’s bottom line.

Now once you have defined what is Nagios, you can mention the various things that you can achieve using Nagios.

By using Nagios you can:

- Plan for infrastructure upgrades before outdated systems cause failures.

- Respond to issues at the first sign of a problem.

- Automatically fix problems when they are detected.

- Coordinate technical team responses.

- Ensure your organization’s SLAs are being met.

- Ensure IT infrastructure outages have a minimal effect on your organization’s bottom line.

- Monitor your entire infrastructure and business processes.

89. How does Nagios work?

Nagios runs on a server, usually as a daemon or service. It periodically runs plugins residing on the same server, and these plugins contact hosts or servers on your network or on the internet. You can view the status information using the web interface, and you can also receive email or SMS notifications if something happens.

The Nagios daemon behaves like a scheduler that runs certain scripts at certain moments. It stores the results of those scripts and will run other scripts if these results change.
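The "store results and react when they change" behavior can be modeled roughly as follows. This is an illustrative sketch, not Nagios code: the function name, the state dictionary, and the callback signature are all assumptions.

```python
def process_result(last_states: dict, service: str, new_state: str, on_change) -> bool:
    """Store the latest state and call on_change only when it differs from the previous one."""
    old_state = last_states.get(service)
    last_states[service] = new_state
    if old_state is not None and old_state != new_state:
        # A state transition (for example OK -> CRITICAL) triggers the follow-up script.
        on_change(service, old_state, new_state)
        return True
    return False
```

Here the callback stands in for the other scripts, such as notifications or event handlers, that run when a result changes. Repeated identical results are stored but trigger nothing.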

Now expect a few questions on Nagios components like Plugins, NRPE, etc…

90. What are Plugins in Nagios?

Begin this answer by defining Plugins. They are scripts (Perl scripts, Shell scripts, etc.) that can run from a command line to check the status of a host or service. Nagios uses the results from Plugins to determine the current status of hosts and services on your network.

Once you have defined Plugins, explain why we need Plugins. Nagios will execute a Plugin whenever there is a need to check the status of a host or service. The plugin will perform the check and then simply return the result to Nagios. Nagios will process the results that it receives from the Plugin and take the necessary actions.
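A plugin reports its result through its exit code and a line of text. The sketch below follows the usual plugin convention of 0 for OK, 1 for WARNING, 2 for CRITICAL, and 3 for UNKNOWN; the check itself, its thresholds, and the function name are hypothetical.

```python
OK, WARNING, CRITICAL, UNKNOWN = 0, 1, 2, 3


def check_usage(used_percent: float, warn: float = 80.0, crit: float = 90.0):
    """Map a usage percentage to a (return code, status line) pair."""
    if not 0 <= used_percent <= 100:
        return UNKNOWN, f"DISK UNKNOWN - invalid reading {used_percent}"
    if used_percent >= crit:
        return CRITICAL, f"DISK CRITICAL - {used_percent:.1f}% used"
    if used_percent >= warn:
        return WARNING, f"DISK WARNING - {used_percent:.1f}% used"
    return OK, f"DISK OK - {used_percent:.1f}% used"
```

A real plugin script would print the status line and then exit with the return code, which is how Nagios receives the result.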

91. What is NRPE (Nagios Remote Plugin Executor) in Nagios?

For this answer, give a brief definition of NRPE. The NRPE addon is designed to allow you to execute Nagios plugins on remote Linux/Unix machines. The main reason for doing this is to allow Nagios to monitor “local” resources (like CPU load, memory usage, etc.) on remote machines. Since these local resources are not usually exposed to external machines, an agent like NRPE must be installed on the remote Linux/Unix machines.

I will advise you to explain the NRPE architecture on the basis of the diagram shown below. The NRPE addon consists of two pieces:

- The check_nrpe plugin resides on the local monitoring machine.

- The NRPE daemon runs on the remote Linux/Unix machine.

There is an SSL (Secure Socket Layer) connection between the monitoring host and the remote host as shown in the diagram below.

92. What do you mean by passive check in Nagios?

The answer should start by explaining Passive checks. They are initiated and performed by external applications/processes and the Passive check results are submitted to Nagios for processing.

Then explain the need for passive checks. They are useful for monitoring services that are Asynchronous in nature and cannot be monitored effectively by polling their status on a regularly scheduled basis. They can also be used for monitoring services that are Located behind a firewall and cannot be checked actively from the monitoring host.
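An external application typically submits a passive result by writing a PROCESS_SERVICE_CHECK_RESULT line to the Nagios external command file. The helper below only builds that line; the host and service names used with it are hypothetical, and the location of the command file depends on the installation, so it is deliberately left out.

```python
def passive_service_result(host: str, service: str, return_code: int,
                           output: str, timestamp: int) -> str:
    """Format one passive service check result as an external command line."""
    if return_code not in (0, 1, 2, 3):
        raise ValueError("return code must be 0 (OK), 1 (WARNING), 2 (CRITICAL) or 3 (UNKNOWN)")
    return (f"[{timestamp}] PROCESS_SERVICE_CHECK_RESULT;"
            f"{host};{service};{return_code};{output}")
```

For example, a nightly backup job could build such a line when it finishes and append it to the command file, so Nagios learns the outcome without ever polling the job.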

93. When does Nagios check for external commands?

Nagios checks for external commands under the following conditions:

- At regular intervals specified by the command_check_interval option in the main configuration file or,

- Immediately after event handlers are executed. This is in addition to the regular cycle of external command checks and is done to provide immediate action if an event handler submits commands to Nagios.

94. What is the difference between Active and Passive checks in Nagios?

For this answer, first, point out the basic difference between Active and Passive checks. The major difference between Active and Passive checks is that Active checks are initiated and performed by Nagios, while passive checks are performed by external applications.

If your interviewer is looking unconvinced with the above explanation then you can also mention some key features of both Active and Passive checks:

Passive checks are useful for monitoring services that are:

- Asynchronous in nature and cannot be monitored effectively by polling their status on a regularly scheduled basis.

- Located behind a firewall and cannot be checked actively from the monitoring host.

The main features of Active checks are as follows:

- Active checks are initiated by the Nagios process.

- Active checks are run on a regularly scheduled basis.

95. How does Nagios help with Distributed Monitoring?

The interviewer will be expecting an answer related to the distributed architecture of Nagios. So, I suggest that you answer it in the below-mentioned format:

With Nagios, you can monitor your whole enterprise by using a distributed monitoring scheme in which local slave instances of Nagios perform monitoring tasks and report the results back to a single master. You manage all configuration, notification, and reporting from the master, while the slaves do all the work. This design takes advantage of Nagios’s ability to utilize passive checks i.e. external applications or processes that send results back to Nagios. In a distributed configuration, these external applications are other instances of Nagios.

96. Explain the Main Configuration file of Nagios and its location?

First, mention what this main configuration file contains and its function. The main configuration file contains a number of directives that affect how the Nagios daemon operates. This config file is read by both the Nagios daemon and the CGIs (the CGI configuration file specifies the location of your main configuration file).

Now you can tell where it is present and how it is created. A sample main configuration file is created in the base directory of the Nagios distribution when you run the configure script. The default name of the main configuration file is nagios.cfg. It is usually placed in the etc/ subdirectory of your Nagios installation (i.e. /usr/local/nagios/etc/).

97. Explain how Flap Detection works in Nagios.

I would advise you to explain Flapping first. Flapping occurs when a service or host changes state too frequently, which results in a storm of problem and recovery notifications.

Once you have defined Flapping, explain how Nagios detects Flapping. Whenever Nagios checks the status of a host or service, it will check to see if it has started or stopped flapping. Nagios follows the below-given procedure to do that:

- Storing the results of the last 21 checks of the host or service

- Analyzing the historical check results and determining where state changes/transitions occur

- Using the state transitions to determine a percent state change value (a measure of change) for the host or service

- Comparing the percent state change value against low and high flapping thresholds

A host or service is determined to have started flapping when its percent state change first exceeds a high flapping threshold. A host or service is determined to have stopped flapping when its percent state change goes below a low flapping threshold.
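The procedure above can be sketched in a few lines. This is a simplified illustration, not Nagios’s actual implementation: it counts every state transition equally (an assumption made here for simplicity), and the function names and threshold values are hypothetical.

```python
def percent_state_change(states):
    """Unweighted percent state change over a history of check states."""
    if len(states) < 2:
        return 0.0
    transitions = sum(1 for prev, cur in zip(states, states[1:]) if prev != cur)
    # N stored checks allow at most N - 1 transitions (20 for 21 checks).
    return 100.0 * transitions / (len(states) - 1)


def update_flapping(is_flapping, states, low=20.0, high=50.0):
    """Apply the two thresholds; low/high are illustrative values only."""
    pct = percent_state_change(states)
    if not is_flapping and pct > high:
        return True   # started flapping: crossed the high threshold
    if is_flapping and pct < low:
        return False  # stopped flapping: dropped below the low threshold
    return is_flapping


history = list("OOOCOOOCCOOOOCCCOOOOO")  # 21 results: O = OK, C = CRITICAL
print(percent_state_change(history))
```

Using two thresholds instead of one gives hysteresis, so a host hovering near a single cut-off does not flip in and out of the flapping state on every check.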

98. What are the three main variables that affect recursion and inheritance in Nagios?

In my view, the proper format for this answer is to first name the variables:

- Name

- Use

- Register

Then give a brief explanation of each variable. The name variable is a placeholder that other objects use to refer to this definition. The use variable names the “parent” object whose properties should be inherited. The register variable can have a value of 0 (indicating that the definition is only a template) or 1 (an actual object). The register value is never inherited.
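A small sketch can show how these three variables interact. It models object definitions as plain dictionaries and handles only a single parent per object (a simplifying assumption); the function name `resolve` and the example host and template are hypothetical.

```python
def resolve(definition, templates):
    """Merge a definition with its template chain; local values win."""
    inherited = {}
    parent = definition.get("use")
    if parent is not None:
        inherited = resolve(templates[parent], templates)
        # The template's own name and register value are not passed down.
        inherited.pop("name", None)
        inherited.pop("register", None)
    merged = {**inherited, **definition}
    merged.pop("use", None)
    # An object without an explicit register value is treated as a real object.
    merged.setdefault("register", 1)
    return merged


templates = {
    "generic-host": {
        "name": "generic-host",
        "check_interval": 5,
        "max_check_attempts": 3,
        "register": 0,  # template only
    }
}
host = {"host_name": "web01", "use": "generic-host", "max_check_attempts": 5}
print(resolve(host, templates))
```

Here the host inherits `check_interval` from the template, overrides `max_check_attempts` locally, and ends up with `register` set to 1 even though the template has 0.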

99. What is meant by saying Nagios is Object Oriented?

The answer to this question is pretty direct. I would answer it by saying, “One of the features of Nagios is its object configuration format, in which you can create object definitions that inherit properties from other object definitions, hence the name. This simplifies and clarifies relationships between various components.”

100. What is State Stalking in Nagios?

State Stalking is used for logging purposes. When Stalking is enabled for a particular host or service, Nagios will watch that host or service very carefully and log any changes it sees in the output of check results.

Depending on the discussion between you and the interviewer you can also add, “It can be very helpful in later analysis of the log files. Under normal circumstances, the result of a host or service check is only logged if the host or service has changed state since it was last checked.”

Containerization and Virtualization Interview Questions

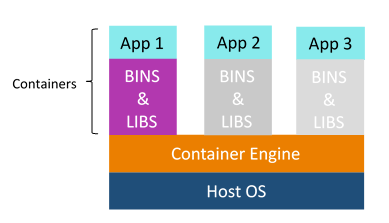

101. What are containers?

My suggestion is to explain the need for containerization first. Containers are used to provide a consistent computing environment from a developer’s laptop to a test environment, and from a staging environment into production.

Now give a definition of containers. A container consists of an entire runtime environment: an application, plus all its dependencies, libraries, other binaries, and configuration files needed to run it, bundled into one package. Containerizing the application platform and its dependencies removes the differences in OS distributions and underlying infrastructure.

102. What are the advantages that Containerization provides over virtualization?

Below are the advantages of containerization over virtualization:

- Containers provide real-time provisioning and scalability but VMs provide slow provisioning

- Containers are lightweight when compared to VMs

- VMs have limited performance when compared to containers

- Containers have better resource utilization compared to VMs

103. How exactly are containers (Docker in our case) different from hypervisor virtualization (vSphere)? What are the benefits?

Given below are some differences. Make sure you include these differences in your answer:

104. What is a Docker image?

The Docker image is the source of the Docker container. In other words, Docker images are used to create containers. Images are created with the build command, and they produce a container when started with the run command. Images are stored in a Docker registry such as registry.hub.docker.com. Because they can become quite large, images are designed to be composed of layers of other images, allowing a minimal amount of data to be sent when transferring images over the network.

Tip: Be aware of Docker Hub in order to answer questions on pre-available images.

105. What is a Docker container?

Docker containers include the application and all of its dependencies but share the kernel with other containers, running as isolated processes in user space on the host operating system. Docker containers are not tied to any specific infrastructure: they run on any computer, on any infrastructure, and in any cloud.

Now explain how to create a Docker container. You can either build your own Docker image and then run it, or run one of the Docker images that are available on Docker Hub.

Docker containers are basically runtime instances of Docker images.

106. What is Docker Hub?

Docker Hub is a cloud-based registry service that allows you to link to code repositories, build your images and test them, store manually pushed images, and link to Docker Cloud so you can deploy images to your hosts. It provides a centralized resource for container image discovery, distribution and change management, user and team collaboration, and workflow automation throughout the development pipeline.

107. How is Docker different from other container technologies?

Docker containers are easy to deploy in the cloud. Docker can get more applications running on the same hardware than other technologies, makes it easy for developers to quickly create ready-to-run containerized applications, and makes managing and deploying applications much easier. You can even share containers with your applications.

If you have some more points to add you can do that but make sure the above explanation is there in your answer.

108. What is Docker Swarm?

Docker Swarm is native clustering for Docker, which turns a pool of Docker hosts into a single, virtual Docker host. Because Docker Swarm serves the standard Docker API, any tool that already communicates with a Docker daemon can use Swarm to transparently scale to multiple hosts.

I will also suggest you include some supported tools:

- Dokku

- Docker Compose

- Docker Machine

- Jenkins

109. What is Dockerfile used for?

In my view, this answer should begin by explaining the use of a Dockerfile. Docker can build images automatically by reading the instructions from a Dockerfile.

Now I suggest you give a small definition of a Dockerfile. A Dockerfile is a text document that contains all the commands a user could call on the command line to assemble an image. Using docker build, users can create an automated build that executes several command-line instructions in succession.

Now expect a few questions to test your experience with Docker.

110. Can I use JSON instead of YAML for my compose file in Docker?

Yes, you can use JSON instead of YAML for your compose file. To use a JSON file with Compose, specify the filename explicitly, for example:

docker-compose -f docker-compose.json up
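If you want to show what such a file could contain, the sketch below generates a small JSON compose document. The service name `web`, the image, and the port mapping are hypothetical values chosen only for illustration.

```python
import json

# Hypothetical single-service definition, mirroring what a YAML file would hold.
compose = {
    "services": {
        "web": {
            "image": "nginx:alpine",
            "ports": ["8080:80"],
        }
    }
}


def render_compose_json(definition):
    """Serialize a compose definition to pretty-printed JSON text."""
    return json.dumps(definition, indent=2)


print(render_compose_json(compose))
```

Saving this output as docker-compose.json gives you a file that can be passed to the -f option.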

111. Tell us how you have used Docker in your past position?

Explain how you have used Docker to help rapid deployment. Explain how you have scripted Docker and used Docker with other tools like Puppet, Chef or Jenkins. If you have no past practical experience in Docker and have past experience with other tools in a similar space, be honest and explain the same. In this case, it makes sense if you can compare other tools to Docker in terms of functionality.

112. How to create Docker container?

I suggest you give a direct answer to this. We can use a Docker image to create a Docker container with the command below:

docker run -t -i <image name> <command name>

This command will create and start the container.

You should also add that to list all containers on a host along with their status (both running and stopped), you can use the command below:

docker ps -a

113. How to stop and restart the Docker container?

In order to stop the Docker container you can use the below command:

docker stop <container ID>

Now to restart the Docker container you can use:

docker restart <container ID>

114. How far do Docker containers scale?

Large web deployments like Google and Twitter, and platform providers such as Heroku and dotCloud all run on container technology, at a scale of hundreds of thousands or even millions of containers running in parallel.

115. What platforms does Docker run on?

Docker Engine runs natively on Linux and on cloud platforms. I would then mention the Linux distributions below:

- Ubuntu 12.04, 13.04 et al

- Fedora 19/20+

- RHEL 6.5+

- CentOS 6+

- Gentoo

- ArchLinux

- openSUSE 12.3+

- CRUX 3.0+

Cloud:

- Amazon EC2

- Google Compute Engine

- Microsoft Azure

- Rackspace

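Note that Linux containers do not run natively on Windows or macOS; on those systems, Docker Desktop runs them inside a lightweight Linux virtual machine.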

116. Do I lose my data when the Docker container exits?

You can answer this by saying: no, I won’t lose my data when the Docker container exits. Any data that your application writes to disk is preserved in its container until you explicitly delete the container. The container’s file system persists even after the container halts.

117. Differentiate between Continuous Deployment and Continuous Delivery?

| Continuous Deployment | Continuous Delivery |

| Deployment to production is automated | Deployment to production is manual |

| App deployment to production can happen as soon as the code passes all the tests. There is no release day in Continuous Deployment | App deployment to production is done manually on a certain day, called “release day” |
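The difference in the table comes down to a single gate before production. Here is a minimal sketch of that decision; the function name `should_release` and the mode strings are hypothetical and not tied to any particular CI/CD tool.

```python
def should_release(tests_passed, mode, manually_approved=False):
    """Decide whether a build goes to production under each model."""
    if not tests_passed:
        return False
    if mode == "continuous_deployment":
        # Passing tests is the only gate; the release is fully automated.
        return True
    if mode == "continuous_delivery":
        # The build is always releasable, but a person triggers the release.
        return manually_approved
    raise ValueError(f"unknown mode: {mode}")


print(should_release(True, "continuous_deployment"))
print(should_release(True, "continuous_delivery"))
```

In both models every passing build is ready for production; only Continuous Deployment pushes it there without human intervention.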

118. Do I lose my data when the Docker container exits?